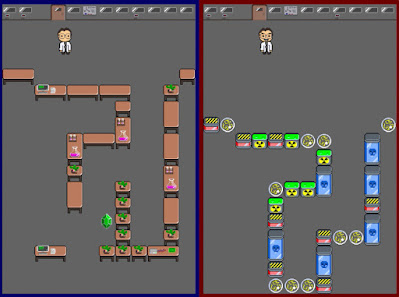

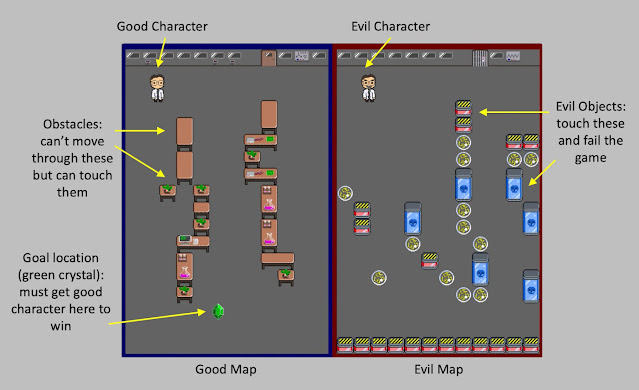

"Quantum Entanglement" was a team entry by Team MYCTL (for which I was part of) for PyWeek 33, (March 2022), a twice yearly video game development competition that gets competitors to build a complete game from scratch in seven days using the python programming language. This competition's theme was "My Evil Twin", and we made a 2D puzzle game where you control two scientists (one good and one evil) walking around within their respective labs, but where the controls for each character are linked to the same keyboard presses. The aim of the game is to direct the "good" scientist to a goal "green crystal" while ensuring that the evil scientist does not touch any of the "evil" items he has lying around in his lab space.

This was my first go at trying to "coordinate" a team entry (bigger than two members) and it was super enjoyable and a great learning experience. We had a good mix of skills in the team, with experience levels ranging from early-stage programmers to professional software devs, and from no game jam experience to very experienced game jam competitors. I learnt a good amount about managing a git repo with a slightly larger team (something I can say I'm probably not very good at!) and had a lot of fun working with others in different creative capacities: we even managed to get a game story going with a script and voice acting, which was super cool :).

There were a lot of really cool puzzle concepts amongst the other entries for this year's jam: I think something about the theme made for a lot of cool ideas, many of them built around controlling two characters (twins) simultaneously. I liked our concept, and would have liked to spend more time thinking through all the emergent mechanics that come out of the rule sets we came up with (but seven days goes so quickly!).

The general premise of the mechanics was that both characters are controlled by the same set of WASD direction commands. Each character's environment is different, however, so depending on how each one interacts with their map (e.g. one character runs up against a wall while the other continues to travel in free space), the "state" of each character (i.e. position in the world) can diverge. The general aim of the game is to guide the "good" character to a goal location (green crystal) while making sure the "evil" character does not touch any of the "evil" objects/squares in their map.
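The linked-control rule described above can be sketched in a few lines of Python. This is only an illustration and not the code from our entry: the tile characters (`#` for a wall, `G` for the goal crystal, `X` for an evil item), the `MOVES` table, and the `step` and `play` functions are all made up for the example, and checking the lose condition before the win condition is an arbitrary choice.

```python
from typing import Dict, List, Tuple

Pos = Tuple[int, int]  # (x, y), with y growing downwards

# Hypothetical key-to-direction table for the shared WASD controls.
MOVES: Dict[str, Pos] = {"w": (0, -1), "a": (-1, 0), "s": (0, 1), "d": (1, 0)}


def step(grid: List[str], pos: Pos, key: str) -> Pos:
    """Move one tile in the direction of `key`, unless a wall or the map edge blocks it."""
    dx, dy = MOVES[key]
    x, y = pos[0] + dx, pos[1] + dy
    if 0 <= y < len(grid) and 0 <= x < len(grid[y]) and grid[y][x] != "#":
        return (x, y)
    return pos  # blocked: this character stays put, the other may still move


def play(good_grid: List[str], evil_grid: List[str],
         good_pos: Pos, evil_pos: Pos, keys: str) -> str:
    """Apply every key press to both characters and report the outcome."""
    for key in keys:
        good_pos = step(good_grid, good_pos, key)
        evil_pos = step(evil_grid, evil_pos, key)
        # Assumption: touching an evil item loses even if the goal is reached on the same move.
        if evil_grid[evil_pos[1]][evil_pos[0]] == "X":
            return "lost"
        if good_grid[good_pos[1]][good_pos[0]] == "G":
            return "won"
    return "playing"


# Two small made-up labs; both characters start in the top-left corner.
GOOD_LAB = [".#G",
            "..."]
EVIL_LAB = ["..X",
            "..#"]

result_safe = play(GOOD_LAB, EVIL_LAB, (0, 0), (0, 0), "sddw")
result_greedy = play(GOOD_LAB, EVIL_LAB, (0, 0), (0, 0), "dd")
```

In this toy layout, heading straight right walks the evil scientist into his item while the good one is stuck behind a wall, whereas going down and around lets a wall in the evil lab hold the evil scientist back just long enough for the good one to reach the crystal.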

Using this mechanic, we developed a few different types of levels:

- Early levels just presented each world with enough clutter to make the player concentrate on both worlds at once, but you could win by simply guiding both characters along the same overall path.

- Later levels would start to require that the player change the relative offset between the characters by pushing one up against a wall that the other did not have in their map. This was often needed when the safe path in one world was shifted relative to the path in the other.

- Final levels would start to build on this complexity by requiring some combination of these effects.
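
To make the "relative offset" idea from the later levels concrete, here is a tiny self-contained sketch. The two maps and the `move_right` helper are hypothetical and exist only for this example: the same key is pressed three times, and the gap between the characters grows each time the evil character is held back by a wall that the good character's map does not have.

```python
# Hypothetical maps: "#" is a wall, "." is free floor.
GOOD_MAP = ["....#",
            "....."]
EVIL_MAP = ["..#..",
            "....."]


def move_right(grid, pos):
    """Step one tile right unless a wall or the map edge is in the way."""
    x, y = pos
    if x + 1 < len(grid[y]) and grid[y][x + 1] != "#":
        return (x + 1, y)
    return pos


good = evil = (0, 0)
offsets = []  # horizontal gap (good x minus evil x) after each shared key press
for _ in range(3):
    good = move_right(GOOD_MAP, good)
    evil = move_right(EVIL_MAP, evil)
    offsets.append(good[0] - evil[0])

print(offsets)  # [0, 1, 2]
```

Once the gap is in place it persists while both characters move freely, which is what lets the player line each character up with a differently placed path.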